Fix site structure before chasing more keywords

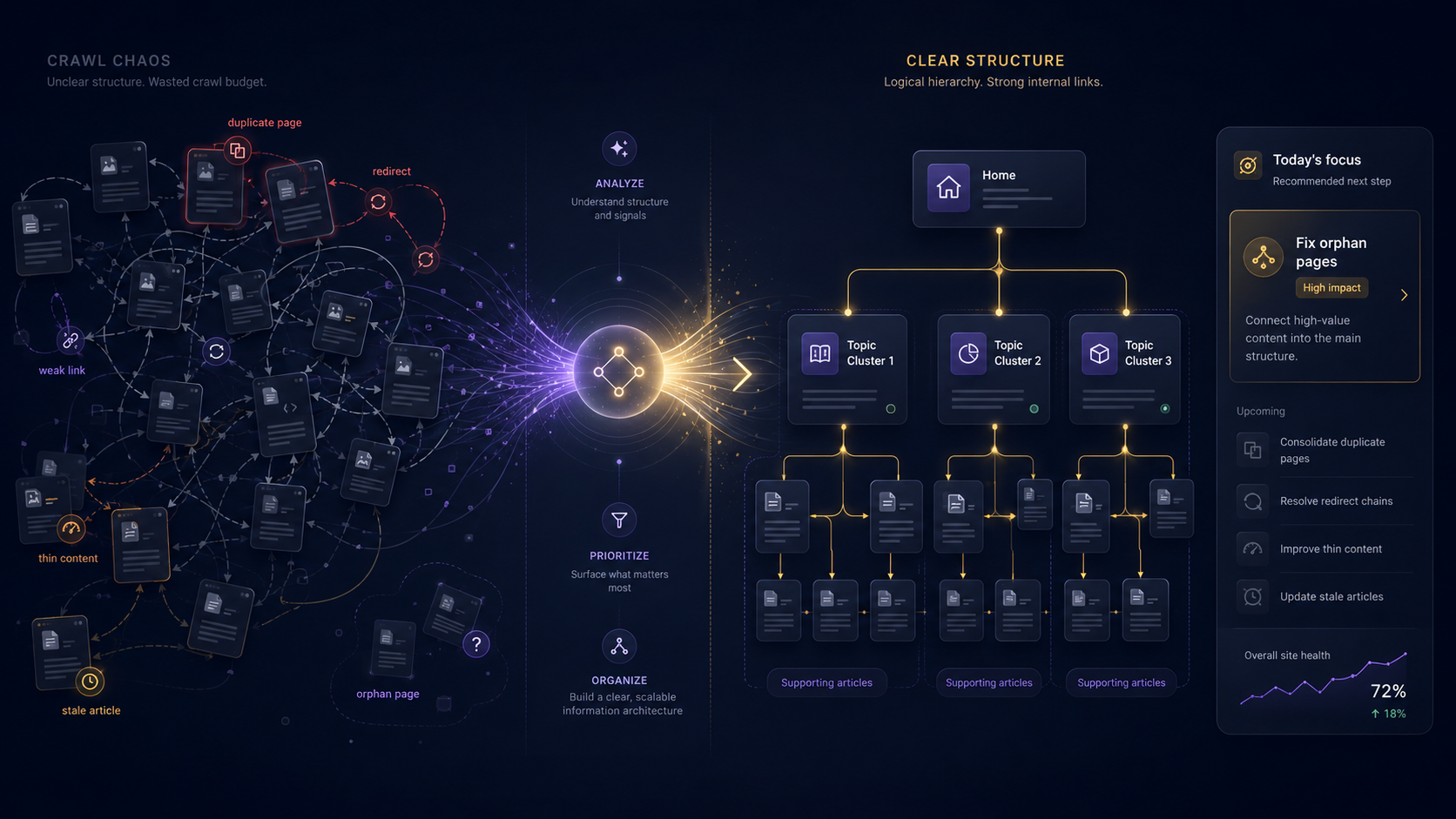

Most messy websites do not need more keyword research first. They need clearer structure, cleaner crawl paths, stronger internal links, and better prioritization.

A lot of SEO projects start the same way.

Traffic slows down a little. Leads flatten. Someone opens a keyword tool. A giant spreadsheet appears. And suddenly the plan is:

“We need more content.”

Sometimes that is true.

But on messy websites, publishing more pages often makes the situation worse before it makes anything better.

Because the problem is not always missing keywords.

Sometimes the website itself has become difficult to understand.

Not just for Google. For people too.

You can usually feel it before you fully diagnose it:

- pages overlap,

- navigation feels inconsistent,

- old content still ranks for things it should not,

- important pages are buried,

- internal links reflect decisions nobody remembers making,

- and every SEO conversation turns into another list of disconnected tasks.

At that point, keyword expansion is usually not the first priority.

Clarity is.

Most messy websites are carrying structural debt

This is one of the least glamorous parts of SEO, which is probably why so many teams avoid it.

Websites accumulate history.

Over time they collect:

- old landing pages,

- campaign URLs,

- duplicate articles,

- weak category pages,

- abandoned content experiments,

- outdated redirects,

- thin service pages,

- archive clutter,

- and navigation decisions tied to previous versions of the business.

None of these things is catastrophic on its own.

The problem is cumulative.

Eventually the site stops feeling like a coherent system and starts feeling like layers of unrelated decisions stacked on top of each other.

That affects more than rankings.

It affects:

- crawl efficiency,

- internal linking,

- topic understanding,

- user trust,

- maintenance,

- reporting,

- and content quality overall.

This is why many websites do not need another content sprint first.

They need editorial cleanup and structural simplification.

Keywords cannot solve a confused website

Keyword research is useful. Very useful.

It helps you understand:

- demand,

- language patterns,

- user intent,

- adjacent topics,

- and opportunities.

But keyword tools cannot fix structural confusion.

They do not solve:

- weak internal links,

- overlapping pages,

- duplicate intent,

- buried content,

- inconsistent canonicals,

- vague page hierarchy,

- or unclear navigation paths.

And this is where a lot of SEO work quietly breaks down.

Teams keep adding pages because the keyword opportunities look real, while ignoring the fact that the existing structure already struggles to explain:

- which pages matter most,

- how topics connect,

- and where users should go next.

More content layered onto weak structure usually creates more noise, not more authority.

The problem often hides behind “we need more traffic”

A page underperforms.

The immediate assumption becomes:

“We targeted the wrong keyword.”

Maybe.

But maybe:

- the page is too deep in the site,

- internal links barely support it,

- three other pages compete for the same intent,

- the title is too generic,

- the content never answers the real question clearly,

- or Google simply does not understand which version of the topic your site actually considers important.

Those are structural problems.

And they are incredibly common.

One reason SEO feels chaotic for many businesses is that symptoms are often mistaken for causes.

Low clicks are treated as a keyword problem. Indexing issues are treated as isolated technical problems. Content decay is treated as a publishing problem.

Meanwhile, the underlying structure remains messy.

Google still needs websites to explain themselves clearly

Google’s documentation around crawling, indexing, links, and canonicalization consistently points back to the same core idea: websites should help search systems understand what pages exist, how they relate, and which ones matter most.

That sounds obvious. But messy websites usually fail at exactly that.

Important pages become hard to discover. Old pages stay indexed long after they should have been consolidated. Internal links reflect outdated priorities. Multiple pages drift toward the same intent. Topic relationships become fuzzy.

At that point, the site stops communicating clearly.

And honestly, users notice this too.

People may not understand canonicalization or crawl depth, but they absolutely notice when:

- navigation feels confusing,

- articles repeat each other,

- pages seem outdated,

- or they cannot tell which page is the “main” answer.

Good structure improves comprehension for everyone.

Internal links are usually more important than another article

This is probably one of the most underrated SEO realities.

Many websites already have enough content to perform better than they currently do.

The problem is that the content is poorly connected.

Important pages may:

- receive almost no internal links,

- sit several clicks deep,

- lack contextual support,

- or exist without any clear topic hub around them.

Meanwhile, old blog posts keep linking to outdated pages or passing users through redirect chains created years ago.

Internal links are how a website teaches search engines the way its topics connect.

It also teaches users:

- what matters,

- what is related,

- and where to go next.

Weak internal linking makes even strong content harder to interpret.

This is why structure work often produces more meaningful SEO gains than publishing another weak article targeting a slightly different keyword variation.
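The two signals mentioned above, how few internal links a page receives and how many clicks deep it sits, can be measured from a crawl export. The following is a minimal sketch, assuming you already have the internal link graph as a dictionary of URL to outgoing links. The function name `link_stats` and the example URLs are hypothetical, not taken from any particular tool.

```python
from collections import deque


def link_stats(links, home):
    """Return (click_depth, inbound_count) for an internal link graph.

    links: dict mapping each URL to the list of internal URLs it links to.
    home:  the URL to start from (usually the homepage).
    """
    # Count distinct linking pages per target, ignoring self-links.
    inbound = {url: 0 for url in links}
    for source, targets in links.items():
        for target in set(targets):
            if target != source:
                inbound[target] = inbound.get(target, 0) + 1

    # Breadth-first search gives the minimum number of clicks from home.
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth, inbound


# Hypothetical example site.
site = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-a", "/"],
    "/blog/post-a": ["/services/audit"],
    "/about": ["/"],
    "/services/audit": [],
    "/old-landing": ["/services/audit"],
}
depth, inbound = link_stats(site, "/")

# Pages that cannot be reached by clicking from the homepage at all.
unreachable = sorted(set(site) - set(depth))
```

In this made-up site the commercial page `/services/audit` sits three clicks deep and is only linked from a blog post and an old landing page that nothing links to. That is the kind of pattern worth fixing before writing another article.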

Crawl data reveals patterns keyword tools cannot see

One reason crawl analysis matters so much is that it exposes systemic issues instead of isolated metrics.

Keyword tools mostly show opportunity.

Crawls show reality.

A crawl can quickly reveal patterns like:

- duplicate titles,

- orphan pages,

- redirected internal links,

- inconsistent canonicals,

- thin content clusters,

- buried commercial pages,

- broken navigation paths,

- weak heading structures,

- or content groups competing for the same intent.

Usually the issue is not one catastrophic error.

It is accumulation.

Messy websites are often suffering from dozens of small structural problems reinforcing each other quietly over time.

That is much harder to see if your workflow starts and ends with keyword research.
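A few of the patterns listed above can be pulled out of crawl rows with very little code. This is a rough sketch, assuming each crawled page is a dictionary with the hypothetical keys `url`, `title`, `status`, and `links`, and that the crawl list also includes URLs found in the sitemap (otherwise orphan pages would never show up in it).

```python
from collections import defaultdict


def crawl_patterns(pages):
    """Group three common structural issues from crawl rows.

    pages: list of dicts with the assumed keys
           'url', 'title', 'status', 'links' (internal URLs linked from the page).
    """
    by_url = {p["url"]: p for p in pages}

    # Duplicate titles: the same normalized title on more than one live URL.
    titles = defaultdict(list)
    for p in pages:
        if p["status"] == 200:
            titles[p["title"].strip().lower()].append(p["url"])
    duplicate_titles = {t: urls for t, urls in titles.items() if len(urls) > 1}

    # Orphan pages: live pages that no other crawled page links to.
    # The homepage will appear here too unless something links back to it.
    linked = {t for p in pages for t in p["links"] if t != p["url"]}
    orphans = [
        p["url"] for p in pages if p["status"] == 200 and p["url"] not in linked
    ]

    # Redirected internal links: links whose target answers with a 3xx status.
    redirected = [
        (p["url"], t)
        for p in pages
        for t in p["links"]
        if t in by_url and 300 <= by_url[t]["status"] < 400
    ]
    return duplicate_titles, orphans, redirected


# Hypothetical crawl rows.
pages = [
    {"url": "/", "title": "Home", "status": 200, "links": ["/seo-audit", "/old-audit"]},
    {"url": "/seo-audit", "title": "SEO Audit", "status": 200, "links": ["/"]},
    {"url": "/old-audit", "title": "", "status": 301, "links": []},
    {"url": "/audit-guide", "title": "seo audit ", "status": 200, "links": ["/"]},
]
duplicate_titles, orphans, redirected = crawl_patterns(pages)
```

None of the three findings is dramatic by itself. Together they describe a topic with two competing pages, one of them unlinked, and a homepage link that still points at a redirect.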

Search Console tells you where the friction already exists

Search Console becomes especially useful when paired with crawl analysis.

Search Console shows:

- which pages already receive impressions,

- which queries Google associates with the site,

- where clicks are weak,

- and where visibility patterns are changing.

That context matters.

Because not every crawl issue deserves equal urgency.

A missing meta description on a low-value archive page is not the same thing as weak structure around a commercial page already receiving impressions for valuable searches.

This is where prioritization becomes more important than issue volume.

Good SEO work is rarely:

“Fix everything.”

It is:

“Fix the things that matter most first.”
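One way to make that prioritization concrete is to weight each crawl issue by the visibility the affected page already has. This is only an illustrative sketch: the issue names, the severity weights, and the scoring formula are assumptions made for the example, not a standard.

```python
def prioritize(issues, impressions, severity):
    """Rank crawl issues by severity weighted by existing search visibility.

    issues:      list of (url, issue_name) pairs from a crawl.
    impressions: dict of url -> Search Console impressions for the period.
    severity:    dict of issue_name -> weight (hypothetical, set by you).
    """
    ranked = []
    for url, issue in issues:
        score = severity.get(issue, 1) * impressions.get(url, 0)
        ranked.append((score, url, issue))
    # Highest score first.
    return sorted(ranked, reverse=True)


# Hypothetical inputs.
issues = [
    ("/archive/2019", "missing_meta_description"),
    ("/services/audit", "few_internal_links"),
    ("/services/audit", "missing_meta_description"),
]
impressions = {"/archive/2019": 12, "/services/audit": 4800}
severity = {"missing_meta_description": 1, "few_internal_links": 3}

ranked = prioritize(issues, impressions, severity)
top_score, top_url, top_issue = ranked[0]
```

With these numbers the weak internal linking around `/services/audit` lands at the top and the archive page lands at the bottom. Note that a page with zero impressions scores zero here, so pages that deserve visibility but have none yet still need a separate look.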

Most businesses do not need another giant audit spreadsheet

This is another uncomfortable truth in SEO.

A 200-page audit rarely solves chaos. Sometimes it increases it.

When teams receive giant lists of disconnected issues, the result is often:

- paralysis,

- random fixes,

- or endless backlog accumulation.

What most businesses actually need is focus.

One meaningful next step.

For example:

- consolidating overlapping pages,

- fixing internal links around an important topic,

- refreshing a declining article that already has visibility,

- improving navigation to commercial pages,

- or clarifying the structure around one confusing topic cluster.

That is actionable.

That is manageable.

And honestly, that is how messy websites improve in the real world: incrementally.

Structure work is often smaller than people expect

The phrase “site structure” sounds intimidating because people associate it with redesigns and migrations.

But many useful improvements are surprisingly practical:

- merging duplicate articles,

- rewriting vague titles,

- improving topic hubs,

- removing low-value pages,

- cleaning redirected links,

- clarifying navigation labels,

- updating stale content,

- or improving internal paths between related pages.

This is not always glamorous work.

But it compounds.

A cleaner structure improves:

- crawl clarity,

- topical understanding,

- maintenance,

- analytics interpretation,

- and user trust simultaneously.

That is why it tends to outperform endless reactive content production over time.
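Cleaning redirected links, one of the items in the list above, is a good example of how small this work can be. Here is a minimal sketch, assuming you have exported your redirects as a simple old URL to new URL mapping. The function names and URLs are hypothetical.

```python
def final_destination(url, redirects, max_hops=10):
    """Follow a redirect map until reaching a URL that does not redirect.

    Returns (final_url, hops). Raises ValueError on loops or very long chains.
    """
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or chain too long at {url}")
        seen.add(url)
    return url, hops


def rewrite_links(links, redirects):
    """Point each internal link straight at its final destination."""
    return [final_destination(link, redirects)[0] for link in links]


# Hypothetical redirect map with a two-step chain.
redirects = {
    "/old-audit": "/seo-audit-2021",
    "/seo-audit-2021": "/services/audit",
}
target, hops = final_destination("/old-audit", redirects)
cleaned = rewrite_links(["/old-audit", "/about"], redirects)
```

The output is a list of links that can be updated in place, so old blog posts stop sending users and crawlers through chains created years ago.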

AI search makes weak structure easier to expose

Some businesses are treating AI search like a completely separate discipline from technical SEO.

That is a mistake.

AI systems still rely on:

- accessible content,

- clear relationships,

- understandable structure,

- and trustworthy information.

A messy website creates fragmented context.

If your information is scattered across:

- duplicate pages,

- disconnected articles,

- weak internal links,

- vague headings,

- and outdated resources,

then you are giving both users and AI systems an inconsistent source.

Good structure gives retrieval systems clearer context to work with.

Not because AI search has magical new ranking rules, but because organized information is easier to interpret than chaos.

The fundamentals still matter.

Maybe more than ever.

One useful priority beats fifty random fixes

This is where many SEO workflows collapse.

Everything becomes urgent.

Every warning feels important. Every issue becomes a project. Every report creates another list.

Eventually nobody knows what actually matters anymore.

The better approach is usually:

- identify structural patterns,

- connect them to business value,

- and narrow the work to one practical priority at a time.

That might mean:

- fixing internal links to one key guide,

- consolidating overlapping service pages,

- improving one underperforming topic cluster,

- or updating pages that already have search visibility but weak clarity.

Momentum matters more than perfection.

Messy sites rarely become clean overnight.

They improve through repeated clarification.

What structure-first SEO decisions look like

Here are a few practical examples.

Example 1: Three pages target nearly the same topic

Weak response:

Publish another article targeting a related keyword variation.

Better response:

Consolidate overlapping content into one stronger page, redirect weaker URLs where appropriate, and rebuild internal links around the clearer topic structure.
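Before consolidating, it helps to confirm which pages really overlap. A rough sketch, assuming you have exported Search Console performance data as (query, page, impressions) rows. The `min_impressions` threshold is an arbitrary assumption to filter out noise, and the URLs are made up.

```python
from collections import defaultdict


def competing_pages(rows, min_impressions=50):
    """Find queries where more than one page earns meaningful impressions.

    rows: iterable of (query, page, impressions) tuples.
    Returns a dict of query -> pages, strongest page first.
    """
    # Sum impressions per query and page.
    totals = defaultdict(lambda: defaultdict(int))
    for query, page, impressions in rows:
        totals[query][page] += impressions

    overlaps = {}
    for query, pages in totals.items():
        strong = {p: n for p, n in pages.items() if n >= min_impressions}
        if len(strong) > 1:
            overlaps[query] = sorted(strong, key=strong.get, reverse=True)
    return overlaps


# Hypothetical export rows.
rows = [
    ("site structure seo", "/blog/site-structure", 900),
    ("site structure seo", "/guides/site-architecture", 400),
    ("site structure seo", "/blog/old-structure-tips", 120),
    ("internal linking", "/guides/internal-links", 700),
    ("internal linking", "/blog/site-structure", 10),
]
overlaps = competing_pages(rows)
```

The first page in each list is a reasonable candidate for the page to keep, but that decision still needs a human look at content quality and intent. Impressions alone do not tell you which version is best.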

Example 2: A page gets impressions but almost no clicks

Weak response:

Add more keywords.

Better response:

Review whether the page actually answers the implied query clearly. Improve titles, structure, internal links, and relevance before expanding content volume.
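Finding these pages is a simple filter on click-through rate. The sketch below assumes (page, clicks, impressions) rows from a Search Console export. Both thresholds are placeholder assumptions and should be tuned to the site, since a normal click-through rate varies a lot by query type and position.

```python
def low_ctr_pages(rows, min_impressions=1000, max_ctr=0.01):
    """Flag pages with real visibility but very few clicks.

    rows: iterable of (page, clicks, impressions) tuples.
    Returns (page, ctr) pairs, lowest click-through rate first.
    """
    flagged = []
    for page, clicks, impressions in rows:
        # Skip pages without enough impressions to judge.
        if impressions >= min_impressions:
            ctr = clicks / impressions
            if ctr <= max_ctr:
                flagged.append((page, round(ctr, 4)))
    return sorted(flagged, key=lambda item: item[1])


# Hypothetical export rows.
rows = [
    ("/guides/site-architecture", 12, 4000),
    ("/blog/post-a", 90, 3000),
    ("/archive/2019", 0, 40),
]
flagged = low_ctr_pages(rows)
flagged_pages = [page for page, _ in flagged]
```

The result is a short review list, not a verdict. Each flagged page still needs someone to check whether the title, structure, and content actually match what the searcher was asking.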

Example 3: Hundreds of pages are not indexed

Weak response:

Force indexing requests for everything.

Better response:

Determine which pages actually deserve indexing. Many low-value pages probably should not compete for crawl attention at all.

Example 4: Old articles still generate impressions

Weak response:

Ignore them and publish something new.

Better response:

Refresh pages that already have visibility signals before creating another weak article from scratch.

The SEO Perception perspective

Most real-world websites are messy.

Not because teams are lazy. Because websites evolve.

Businesses change direction. Products change. Services expand. Content accumulates. Priorities shift.

The problem is that very few workflows help teams simplify the mess intelligently.

That is the gap SEO Perception is trying to close.

Not just:

“Here are 400 issues.”

But:

“Here is what matters next.”

Search Console reveals demand signals. Crawl data reveals structural reality. AI helps group and explain patterns. Today’s focus narrows the work into something practical.

That is a much healthier workflow than endless keyword chasing.

Final thought

When a website feels messy, the answer is usually not another content calendar.

It is clarity.

Cleaner structure. Better internal links. Fewer overlapping pages. Stronger topic relationships. Updated content. More obvious priorities.

Keyword research still matters. Content still matters.

But structure determines whether the rest of the work can actually compound properly.

A messy website does not always need more pages.

Sometimes it just needs to make better sense.

To continue after fixing structure, read “Internal links are not housekeeping. They are how your site explains itself.”, “Query fan-out means your content gaps are bigger than your keyword list”, and “You should not need to be a technical SEO expert to know what is wrong with your site”.

Evidence and update policy

These articles are written from crawl diagnostics, Search Console interpretation, and cited public documentation when platform behavior is referenced. Guidance is updated when source platforms change materially.